Beyond the Hype: Why Your Biggest AI Problem Isn't Technology. It's Trust

This post is inspired by the AI Daily Brief episode "AI has a PR problem."

The Canary in the Coal Mine: A Crisis of Trust

A recent episode of the AI Daily Brief podcast highlighted a critical, yet often overlooked, barrier to AI adoption: a deep and growing trust deficit. The 2024 Edelman Trust Barometer, a comprehensive study of over 32,000 respondents across 28 countries, delivered a stark message to enterprise leaders. In the United States, rejection of AI outweighs enthusiasm by a factor of nearly three to one, with 49% of Americans saying they reject the growing use of AI, compared to just 17% who embrace it. [1] This isn't just public opinion; it's a direct reflection of the anxieties felt within the enterprise itself. Employees are not passive observers in this transformation; they are the key to unlocking AI's potential, and right now, they are deeply skeptical.

From Public Fear to Enterprise Friction: The Strategic Implications

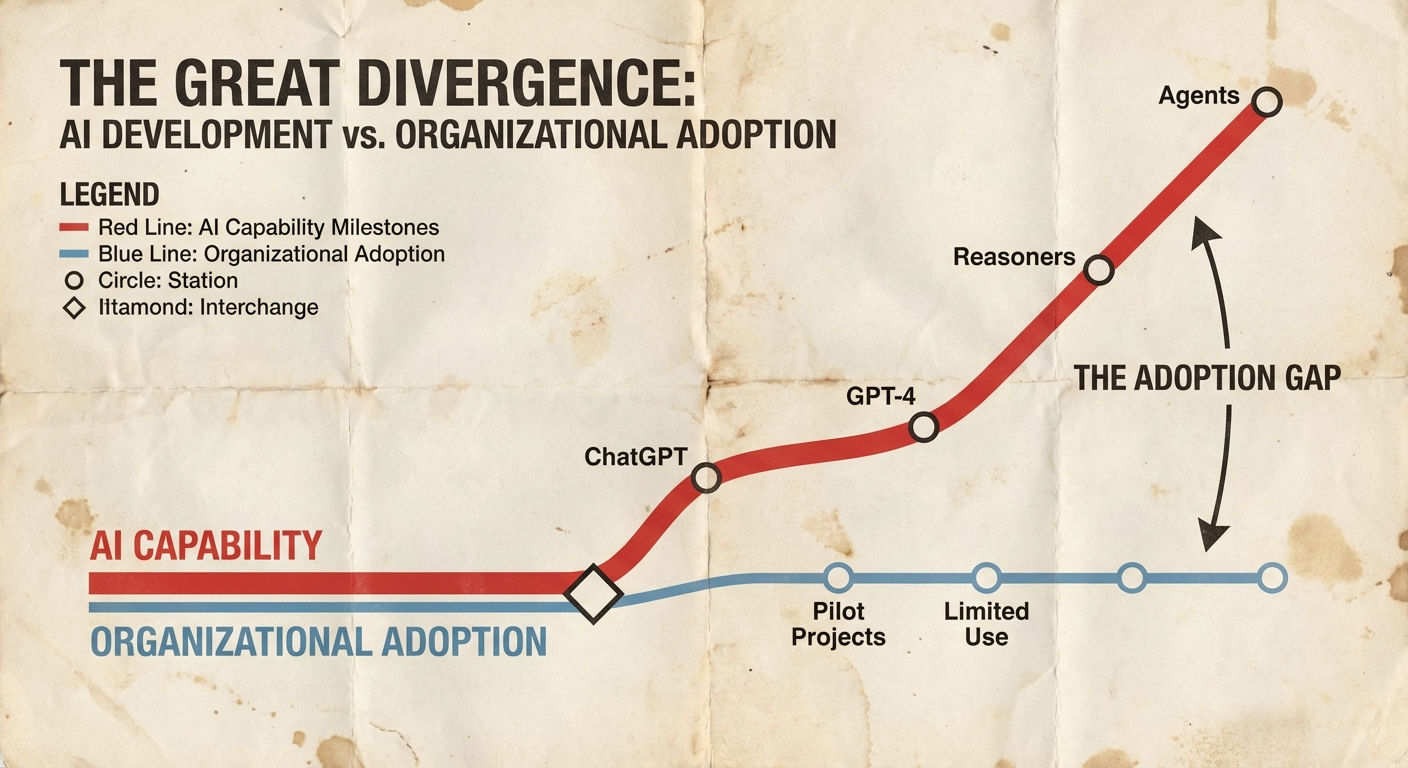

The Edelman study reveals a chasm between the optimism of the AI community and the reality on the ground. While 54% of people in China embrace AI, 49% in the U.S. reject it, and similar patterns emerge across Western Europe. [1] This isn't an abstract cultural difference; it's a direct threat to enterprise AI initiatives. The reasons are clear and actionable. Seven in ten Americans believe business leaders are not being honest about using AI to eliminate jobs. [1] That suspicion has room to grow when spending runs ahead of results. Research from McKinsey shows that while 92% of companies plan to increase their AI spending over the next three years, only 1% have achieved full AI maturity. [2] The gap between investment and demonstrated value is precisely where trust erodes.

This trust deficit manifests in several critical ways for enterprise leaders. The primary hurdles to enterprise AI adoption are not technical; they are human. McKinsey reports that 41% of employees are apprehensive about AI's impact on their work. [3] This apprehension breeds resistance, slows adoption, and sabotages ROI. Without a foundation of trust, new AI tools are viewed with suspicion, not excitement, making it nearly impossible to get the buy-in needed to move beyond small-scale pilots. The companies that win with AI will be those that build a culture of trust and transparency. Those that don't will face a disengaged workforce and an inability to adapt to the disruptions AI will inevitably bring.

The Questions Every Executive Team Should Be Debating

If the trust deficit is the silent killer of AI initiatives, how can you diagnose your organization's health? These are the questions that separate the prepared from the perplexed:

- Do we have a clear, honest, and openly communicated strategy for how AI will augment our workforce, not just replace it? The Edelman study found that 59% of U.S. respondents said their enthusiasm for AI would increase if they felt sure their employer was using AI to increase productivity rather than to eliminate jobs. [1]

- Are we investing as much in our people, through training, upskilling, and change management, as we are in the technology itself? Fifty-seven percent of U.S. respondents said high-quality training would increase their enthusiasm. [1]

- Is our leadership team aligned on the ethical guardrails and governance required to build and maintain trust in our AI systems? Organizations that don't address inaccuracy and explainability head-on will struggle to scale.

- How are we measuring the success of our AI initiatives beyond technical metrics? McKinsey's research shows that only 39% of organizations report enterprise-level EBIT impact from AI. [2] The gap is often in the human factors.

- Do we have an objective assessment of our AI readiness, or are we relying on internal biases and vendor hype?

Building the Bridge from Fear to Forward Momentum

Answering these questions requires a shift in mindset, from viewing AI as a technology to be implemented to seeing it as a strategic transformation to be led. In our work with enterprise clients, we've observed a clear pattern: organizations that succeed don't just buy technology; they build a strategic framework first. They understand that a successful AI strategy is built on a foundation of trust, transparency, and a deep understanding of their own organizational readiness.

This is the philosophy we've codified into our AI Readiness Audit, a diagnostic process designed to provide the objective clarity that leadership teams need. It's not about a score; it's about a roadmap. It's about identifying the gaps in your strategy, your data, your talent, and your culture before they become insurmountable roadblocks.

Your AI Journey is Unique. Your Roadmap Should Be Too.

Every organization's journey with AI is unique. If the questions and challenges discussed in this post resonate with your team, we welcome a conversation. We read all our emails, so ping me at danv@besuper.ai. Our goal is to help leaders build a clear, actionable roadmap that addresses not just the technical challenges of AI, but the human and organizational ones as well. To learn more about how a structured assessment can de-risk your AI investment and accelerate your path to value, you can explore our approach at besuper.ai or reach out to our team to discuss your specific situation.

---

References

[1] Edelman. (2024). 2024 Edelman Trust Barometer. Retrieved from https://www.edelman.com/trust/2024/trust-barometer

[2] McKinsey & Company. (2025). The State of AI: Global Survey 2025. Retrieved from https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

[3] McKinsey & Company. (2024). The state of AI in early 2024: Gen AI adoption spikes and starts to generate value. Retrieved from https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai-2024
